Feed: Astral Codex Ten

I hate the term “hallucinations” for when AIs say false things. It’s perfectly calculated to mislead the reader - to make them think AIs are crazy, or maybe just have incomprehensible failure modes.

AIs say false things for the same reason you do.

At least, I did. In school, I would take multiple choice tests. When I didn’t know the answer to a question, I would guess. Schoolchild urban legend said that “C” was the best bet, so I would fill in bubble C. It was fine. Probably got a couple extra points that way, maybe raised my GPA by 0.1 over the counterfactual.

Some kids never guessed. They thought it was dishonest. I had trouble understanding them, but when I think back on it, I had limits too. I would guess on multiple choice questions, but never the short answer section. “Who invented the cotton gin?” For any “who invented” question in US History, there’s a 10% chance it’s Thomas Edison. Still, I never put down his name. “Who negotiated the purchase of southern Arizona from Mexico?” The most common name in the United States has long been “John Smith”, applying to 1/10,000 individuals. An 0.01% chance of getting a question right is better than zero, right? If I’d guessed “John Smith” for every short answer question I didn’t know, I might have gotten ~1 extra point in my school career, with no downside.

You can go further. Consider an essay question: “Describe the invention of the cotton gin and its effect on American history, citing your sources.” Suppose I slept when I should have studied and knew nothing about this. A one-in-a-million chance of getting it correct is better than literally zero, right?

The cotton gin was invented by Thomas Edison in 1910. It was important because gin made with cotton, of which the Southern plantation economy produced a surplus, was cheaper than the usual gin made with juniper berries. This lowered the price of alcoholic spirits considerably. According to historian John Smith in his seminal The Invention Of The Cotton Gin For Dummies, the resulting boom in alcoholism provoked a backlash that ultimately led to Prohibition.

I won’t say no human has ever done this, because I remember one kid doing it during a presentation in twelfth grade. It was so embarrassing (for him) that it remains seared in my memory - which sufficiently explains why most of us don’t try it. A one-in-a-million chance of a better grade isn’t worth the shame of a 999,999-in-a-million chance of sounding like an idiot.

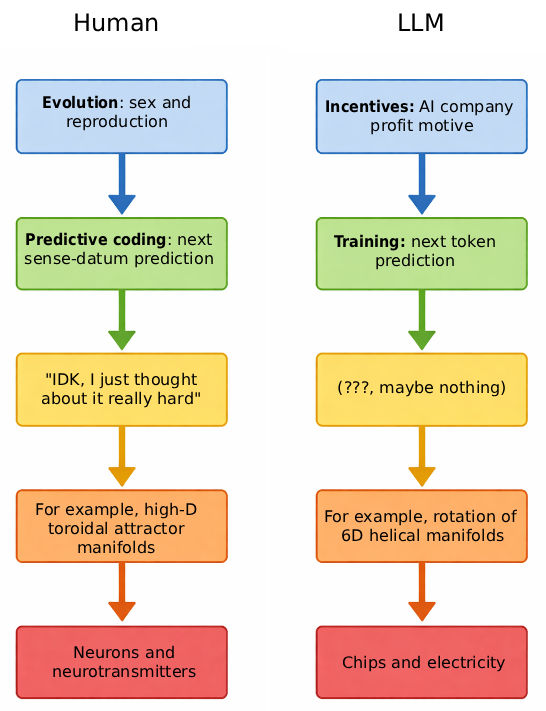

AIs have no shame. Their entire training process is based on guessing (the polite term is “prediction”). It goes like this:

AIs start with random weights, ie total chaos.

They’re asked to predict the next token in a text.

They give a random answer.

When they get it wrong, the training process slightly updates their weights towards the pattern that would have gotten it right.

After billions of tokens, their weights are in a good, nonrandom pattern that often predicts the next token successfully.

But even after step 5, they’re still guessing. Consider the following sentence: “I went out with my friend Mr. _______ “. With your human knowledge, you can predict that the token in the blank will be a surname. But you have no way to know which. If your life was on the line, you might guess “Smith”, since it’s the most common surname. Even the smartest AI can do little better.

And over the massive training process, even the craziest guesses sometimes pay off. Imagine you took one hundred trillion history classes. One in every million times you wrote a fake essay like the one above, your teacher said “Great job, that was exactly right, here’s a gold star.”

So the interesting question isn’t why AIs hallucinate: during training, guessing correctly is rewarded, guessing incorrectly isn’t punished, so the rational strategy is to always guess (and increase your chance of being right from 0 to 0.001%). Since AIs in normal consumer use follow the strategies they learned during training, they guess there too. The interesting question is why AIs sometimes don’t hallucinate. Here the answer is that the AI starts out hallucinating 100% of the time, the AI companies do things during post-training to bring that number down, and eventually they reduce it to “acceptable” levels and release it to users.

How do we know this is what’s happening? When researchers observe an AI mid-hallucination, they see the model activates features related to deception - ie fails an AI lie detector test. The original title of this post was “Lies, Not Hallucinations” and I still like this framing - the AI knows what it’s doing, in the same way you’d know you were trying to pull one over on your teacher by writing a fake essay. But friends talked me out of the lie framing. The AI doesn’t have a better answer than “John Smith”. It’s giving its real best guess - while knowing that the chance it’s right is very small.

Why does this matter? I often see people in the stochastic parrot faction say that AIs can’t be doing anything like humans, because they have this bizarre inhuman failure mode, “hallucinations” which is incompatible with being a normal mind that has some idea what’s going on. Therefore, it must be some kind of blind pattern-matching algorithm. Calling them “shameless guesses” hammers in that the AI is doing something so human and natural that you probably did it yourself during your student days.

Understood correctly, this is a story about alignment. AIs are smart enough to understand the game they’re actually playing - the game of determining strategies that get reward during pretraining. We just haven’t figured out how to align their reward function (get a high score on the pretraining algorithm) with our own desires (provide useful advice). People will say with a straight face “I don’t worry about alignment because I’ve never seen any alignment failures . . . and also, all those crazy hallucinations prove AIs are too dumb to be dangerous.”

This is the weekly visible open thread. Post about anything you want, ask random questions, whatever. ACX has an unofficial subreddit, Discord, and bulletin board, and in-person meetups around the world. Most content is free, some is subscriber only; you can subscribe here. Also:

1: Another ACX Forecasting Contest winner has come forth and revealed themselves. Giacomo P is a statistics PhD working on Bayesian methods. He's looking for an academic job; if you are hiring, read more about him here. He also asks that any "law nerd" who reads this bet on his prediction markets about an upcoming Italian referendum , which will help him cast an informed vote next Sunday.

2: Some good responses to the post on the constitutional amendment about Giant Congress. In case you were wondering whether the reversed meaning in the amendment was really a typo, commenter i_eat_pork tracked down the history, and yeah, definitely a typo. And commenter Caral found that the amendment might have been passed by an extra state in 1790, and therefore should be considered ratified - but DC was never informed, and there’s no clear way to tell the legal system “hey, there’s a amendment you don’t know about which should legally be in effect”. A job for an enterprising constitutional lawyer?

3: Some ACX readers wish me to advertise that they’ve started Nectome, a revolutionary new cryonics company (ie preserve your dead body intact in case the future learns how to revive people). They write:

We preserve the whole body, including the brain, at nanoscale, subsynaptic detail. We are capable of preserving every neuron and every synapse in the brain, and almost every protein, lipid, and nucleic acid within each cell and throughout the entire body is held in place by molecular crosslinks…unlike previous cryonics methods that required extremely low-temperature liquid nitrogen coolant, our method is stable for months at room temperature and compatible with traditional funeral practices.

More information here, and they have a pre-sale (at $100,000 per body) going on until the end of April.

4: New subscribers-only post, Lines Composed In A Fake Sequoia Forest. If you see a beautiful photo, and later learn it was AI-generated, are you harmed? What is the harm?

There are ACX meetup groups all over the world. Lots of people are vaguely interested, but don’t try them out until I make a big deal about it on the blog. Some people who try meetups out realize they love ACX meetups and start going regularly. Since learning that, I’ve tried to make a big deal about it on the blog twice annually, and it’s that time of year again.

If you’re willing to organize a meetup for your city please fill out the organizer form by March 26th.

The form will ask you to pick a location, time, and date, and to provide an email address where people can reach you for questions. It will also ask a few short questions about how excited you are to run the meetup to help pick between multiple organizers in the same city. One meetup per city will be advertised on the blog, and people can get in touch with you about details or just show up.

Organizing an ACX Everywhere meetup can be easy. Pick a time and a place (parks work well if you think there will be a lot of people, cafes or apartments work fine for fewer) and show up with a sign saying “ACX Meetup.” You don’t need to have discussion plans or a group activity. If you want to make the experience better for people, you can bring nice things like nametags, food and drinks, or games. Meetups Czar Skyler can reimburse you for the nametags, food, drinks, and other things like that, though reimbursements are likely going to go out slower than last year.

Here’s a short FAQ for potential meetup organizers:

1. How do I know if I would be a good meetup organizer?

If you can put a name/time/date in a box on Google Forms and show up there, you have the minimum skill necessary to be a meetup organizer for your city, and I recommend you volunteer.

Don’t worry, you volunteering won’t take the job away from someone more deserving. The form will ask people how excited/qualified they are about being an organizer, and if there are many options, I’ll choose between them. (Or Meetups Czar Skyler will.) But a lot of cities might not have an excited/qualified person, in which case I would rather the unexcited/unqualified people sign up, than have nobody available at all. If you are the leader of your city’s existing meetup group, please fill in the form anyway and say so. That lets me know you’re still active, and also importantly lets me know when your meetup is planned for.

This spreadsheet shows the cities where someone has filled out the form, updated manually after checking it makes sense. If you don’t see your city listed, either nobody has yet signed up or they did it recently after the last check. Beware the Bystander Effect!

2. How will people hear about the meetup?

You give me the information, and on March 27th (or so), I’ll post it on ACX. An event will also be created on LessWrong’s Community page.

3. When should I plan the meetup for?

Since I’ll post the list of meetup times and dates around March 27th, please choose sometime after that. Any day April 1st through May 31st is okay. Weekends are usually good, since it’s when most people are available. You’ll probably get more attendance if you schedule for at least one week out, but not so far out that people will forget - so mid April or early May would be best. If you’re in a college town, it might be worth checking the local graduation dates and avoiding those.

4. How many people should I expect?

Historically these meetups get anywhere from zero to over a hundred. Meetups in big US cities (especially ones with universities or tech hubs) had the most people; meetups in non-English-speaking countries had the fewest. You can see a list of every city and how many attendees most of them had last time here. Plan accordingly. If it looks like your city probably won’t have many attendees, maybe bring a friend or a book so you’ll have a good time even if nobody shows up.

5. Where should I hold the meetup?

A good venue should be easy for people to get to, not too loud, and have basic things like places to sit, access to toilets, and the option of acquiring food and water. City parks and mall common areas work well. If you want to hold the meetup at your house, remember that this will involve me posting your address on the Internet. If you want to hold the meetup at a pub or bar, remember that college students or parents with children who want to attend might not be able to get in.

6. What should I do at the meetup?

Mostly people just show up and talk. If you’re worried about this not going well, here are some things that can help:

Have people indicate topics they’re interested in by writing something on their nametag.

Write some icebreakers / conversation starters on index cards (e.g. “What have you been excited about recently?” or “How did you find the blog?” or “How many feet of giraffe neck do you think there are in the world?”) and leave them lying around to start discussions.

Say hello to people as they arrive and introduce yourself.

In general I would warn against trying to impose mandatory activities (e.g. “now we’re all going to sit down and watch a PowerPoint presentation”), but it’s fine to give people the option to do something other than freeform socializing (e.g. “go over to that table if you want to play a game”).

7. Is it okay if I already have an existing meetup group?

Yes. If you run an existing ACX meetup group, just choose one of your meetings which you’d like me to advertise on my blog as the official meetup for your city, and be prepared to have a larger-than-normal attendance who might want to do generic-new-people things that day.

If you’re a LW, EA, or other affiliated community meetup group, consider carefully whether you want to be affiliated with ACX. If you decide yes, that’s fine, but I might still choose an ACX-specific meetup over you, if I find one. I guess this would depend on whether you’re primarily a social group (good for this purpose) vs. a practical group that does rationality/altruism/etc activism (good for you, but not really appropriate for what I’m trying to do here). I’ll ask about this on the form.

8. If this works, am I committing to continuing to organize meetup groups forever for my city?

The short answer is no.

The long answer is no, but it seems like the sort of thing somebody should do. Many cities already have permanent meetup groups. For the others, I’ll prioritize would-be organizers who are interested in starting one. If you end up organizing one meetup but not being interested in starting a longer-term group, see if you can find someone at the meetup who you can hand this responsibility off to.

I know it sounds weird, but due to the way human psychology works, once you’re the meetup organizer people are going to respect you, coordinate around you, and be wary of doing anything on their own initiative lest they step on your toes. If you can just bang something loudly at the meetup, get everyone’s attention, and say “HEY, ANYONE WANT TO BECOME A REGULAR MEETUP ORGANIZER?”, somebody might say yes, even if they would never dream of asking you on their own and wouldn’t have decided to run things without someone offering.

If someone does want to run things regularly, you or they can offer to collect people’s names and emails if they’re interested in future meetups. You could do this with a pen and paper, or if you’re concerned about reading people’s handwriting, you could use a QR code/bitly link to a Google Form.

9. Are you (Scott) going to come to some of the meetups?

I have in the past, but this year I’ll probably only be able to make my local one in Berkeley.

10. What if I have other questions?

Skyler and I will read the comments here.

Again, you can find the meetup organizer volunteer form here. If you want to know if anyone has signed up to run a meetup for your city, you can view that here. Everyone else, just wait until around 3/27 and I’ll give you more information on where to go then.

[This is a guest post, written by David Speiser, author of the Ollantay review in last year’s Non-Book Review contest. David provided the concept and original draft; Scott edited the final version. Remaining mistakes are likely mine (Scott’s)]

The Problem

Everyone hates Congress. That poll showing that cockroaches are more popular than Congress is now thirteen years old, and things haven’t improved in those thirteen years. Congressional approval dipped below 20% during the Great Recession and hasn’t recovered since.

A republic where a supermajority of citizens neither like nor trust their representatives is not the most stable of foundations, so it should not be shocking that the legislative branch is being subsumed by the executive.

What’s the solution? Many have been proposed, some with very snazzy websites. FairVote thinks that ranked choice voting and proportional representation will solve it. The Congressional Reform Project has another snazzy website with such bold proposals as “Increase the opportunity for Members to form relationships across party lines, including by bipartisan issues conferences.” There are more think tanks. They want to enlarge the House by a few hundred members, switch to a biennial budget system, spend more on Congressional staffers, and introduce term limits, among many other suggestions.

There are op-eds too. Here’s how the Atlantic wants to fix Congress. The New York Times of course has a solution. Here on Substack, Matt Yglesias thinks proportional representation is the solution, and Nicholas Decker has an especially interesting solution.

These proposals, no matter which direction they’re coming from, have two things in common. The first is that they largely agree on the problem: members of Congress are disconnected from their constituents. Thanks to a combination of huge gerrymandered districts, national partisan polarization, and the influence of large donors, a representative has little incentive to care about the experience of individual people in their district.

The second thing that all these proposed solutions have in common is that none of them will ever be implemented. They all involve acts of Congress - and members of Congress have no incentive to vote to change broken systems that currently benefit them. Why would you want to stop gerrymandering when it’s the reason you don’t have to run a real campaign to stay in office? Why would you vote to give yourself more work? Why would you vote to make it harder for people to give you money? If we want to fix Congress, we need a solution that doesn’t involve Congress.

Luckily for us, such a solution exists: if we get 27 states to ratify the Congressional Apportionment Amendment, then we can make some real progress towards fixing Congress without Congressional buy-in. This solution is not a new idea. It comes up every few years and gets little traction. My hope in writing this piece is that it gets more traction now.

The Only A+ Ever Given At The University Of Texas

In 1789, Congress passed the Bill of Rights, containing twelve Constitutional amendments meant to protect the American people. Ten of these twelve were ratified by the states and became law. Two failed and were forgotten.

Eighty three years later - in 1872 - a Congress voted themselves a pay raise1. In fact, they voted themselves a pay raise effective as of two years ago, meaning that every member of Congress immediately received two years of back pay.

The American people were outraged, especially after an economic crisis hit later that year. In the midst of the backlash, a member of the Ohio state legislature remembered the failed eleventh amendment in the Bill of Rights, which read:

No law, varying the compensation for the services of the Senators and Representatives, shall take effect, until an election of Representatives shall have intervened.

In other words, if Congress votes themselves a pay raise, it can’t take effect before the next election cycle. Ohio decided - better late than never - and became the 9th state to ratify the amendment, almost a century after the first eight. But it still wasn’t enough, and besides, the American people punished Congress in a more traditional way: they voted the Republican majority out of office and handed the chamber to the Democrats. Everyone forgot the eleventh amendment a second time.

One hundred ten years later - in 1982 - an undergrad at University of Texas in Austin wrote a paper on the pay-raise amendment, mentioning that there wasn’t technically anything in the Constitution that said that amendments had expiration dates. He got a C on the paper and very reasonably turned that into a decade-long crusade to prove his history teacher wrong. He started a nationwide campaign to get state legislatures to ratify the amendment. In 1992, he succeeded: the 38th state approved the provision, and it was added to the Constitution as what is now the Twenty-Seventh Amendment. The crusade worked; thirty-four years after the original paper, his political science teacher submitted a petition to the university to retroactively change his grade to an A+; since there is no A+ on the official UT grading rubric, this became the only A+ ever given in the history of the University of Texas.

That means eleven of the original twelve Bill of Rights amendments have made it into the Constitution. There’s only one left. It’s been ratified by eleven states already. If twenty-seven more states agree, it will become the law of the land. It is the right to Giant Congress.

The Right To Giant Congress

Here is the text of the Congressional Apportionment Amendment, the sole unratified amendment from the Bill of Rights:

After the first enumeration required by the first article of the Constitution, there shall be one Representative for every thirty thousand, until the number shall amount to one hundred, after which the proportion shall be so regulated by Congress, that there shall be not less than one hundred Representatives, nor less than one Representative for every forty thousand persons, until the number of Representatives shall amount to two hundred, after which the proportion shall be so regulated by Congress, that there shall not be less than two hundred Representatives, nor more than one Representative for every fifty thousand persons.

In other words, there will be one Representative per X people, depending on the size of the US. Once the US is big enough, it will top out at one Representative per 50,000 citizens.

(if you’ve noticed something off about this description, good work - we’ll cover it in the section “A Troublesome Typo”, near the end)

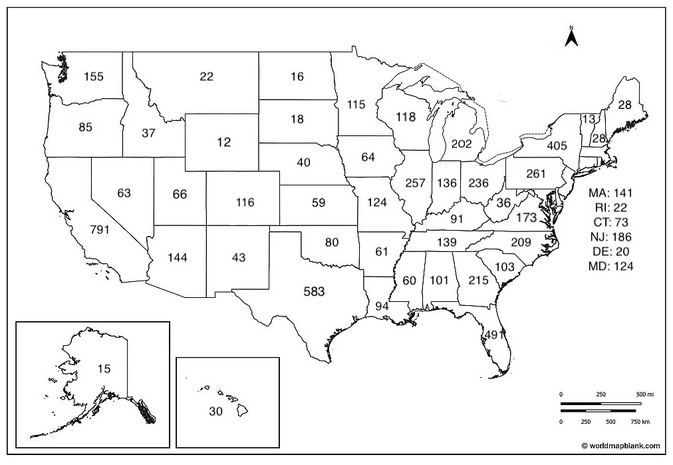

The US is far bigger than in the Framers’ time, so it’s the 50,000 number that would apply in the present day. This would increase the size of the House of Representatives from 435 reps to 6,6412. Wyoming would have 12 seats; California would have 791. Here’s a map:

This would give the U.S. the largest legislature in the world, topping the 2,904-member National People’s Congress of China. It would land us right about the middle of the list of citizens per representative, at #104, right between Hungary and Qatar (we currently sit at #3, right between Afghanistan and Pakistan).

Would this solve the issues that make Congress so hated? It would be a step in the right direction. Our various think tanks identified three primary reasons behind the estrangement of Congress and citizens: gerrymandering, national partisan polarization, and the influence of large donors. This fixes, or at least ameliorates, all of them.

Gerrymandering: Gerrymandering many small districts is a harder problem than gerrymandering a few big ones. Durable gerrymandering requires drawing districts with the exact right combination of cities and rural areas, but there are only a limited number of each per state. With too many districts, achievable margins decrease and the gerrymander is more likely to fail.

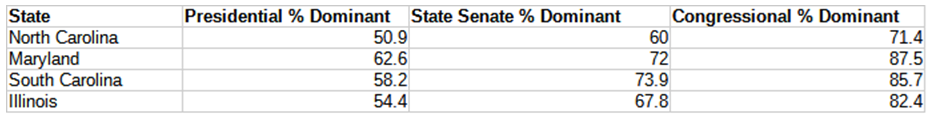

We can see this with state legislatures vs. congressional delegations. A dominant party has equal incentive to gerrymander each, but most states have more legislature seats than Congressional ones, and so the legislatures end up less gerrymandered. Here are some real numbers from last election cycle3:

So for example, in Republican-dominated North Carolina, 50.9% of people voted Trump, 60% of state senate seats are held by Republicans, and 71.4% of their House seats belong to Republicans. The state senate (50 seats) is only half as gerrymandered as the House delegation (14 seats).

In many states, the new CAA-compliant delegation would be about the same size as the state legislature, and so could also be expected to halve gerrymandering.

As a bonus, the Electoral College bias towards small states would be essentially solved. Currently, a Wyomingite’s presidential vote controls three times as many electoral votes as a Californian’s. Under the CAA, both states would be about equal.

Money: This one is intuitive. If you can effectively buy 1/435 elections, you’ve bought 0.23% of Congress. If the same money only buys you 0.02% of Congress, you’re less incentivized to try to buy House elections and more incentivized to try to buy Senate seats or just to gain influence within a given political party. Money in politics is still a thing, but it becomes much harder to coordinate among people. This makes it easier for somebody to run for Congress without having to fundraise millions of dollars. Because it’s less worth it to spend so much money on any one seat, elections to the House become cheaper4.

Polarization: Some of the think tanks that want to increase the size of Congress by a few hundred members rather than a few thousand claim that this increase will fix political polarization by making representatives more answerable to their constituents who tend to care more about local issues than national ones.

I’m more skeptical of this claim, mainly because it seems that all politics is national politics now. There’s one newspaper and three websites and all they care about is national politics. My Congressional representative ran for office touting her background in energy conservation and water management, arguing that in a drying state and a warming climate we really need somebody in Congress who knows water problems inside and out. Now that she’s actually in Congress, it seems that her main job is calling Donald Trump a pedophile5. The incentives here are to get noticed by the press and to go viral talking about how evil the other side is, so that people who are angry at the evil other side will give you money and you can win your next election.

But maybe Big Congress can solve that. Maybe in a district of less than 50,000 there will be less incentive to go viral and more incentive to connect with your constituents. At the very least, it seems that people trust their state representatives more. And when my state representative and my state Senator tell me about the good work that they’ve done and ask for me to vote for them again, they point to legislation that they’ve passed, not clips of them calling their opponents pedophiles.

Won’t Congress Become Unmanageable?

At first, probably yes!

The Capitol Building couldn’t fit a 6,641 person Congress, let alone all of the extra staffers and administrative personnel who would come with it. We’d need to build a new monument to the largest democratic body in the history of the world. This is a good thing.

But it would also become conceptually unmanageable, with individual members having more trouble networking with one another and sounding out consensus. I expect that out of necessity, the House would take on a more parliamentary form with the party as the baseline for decision making. Then the big negotiations become those between parties, not between individuals.

Why Should I Support This?

Democrats: You’re about to take a beating in the next census. California is moving to gerrymander its Congressional delegation, but it’s also going to lose four seats. Texas is moving to gerrymander its delegation even more aggressively, and it’s going to gain four seats. Florida is going to gain three. Illinois and New York are losing seats. Across the board it’s bad news; while you might come out on top in this year’s elections, you’re going to lose the gerrymandering battle come 2030. Ratifying the CAA will make the battle that much fairer for you.

Republicans: You’re about to take a beating in the midterms. The aggressive gerrymandering in Texas could easily backfire in a blue year, and California just passed the “I Hate Republicans” act to gerrymander that state as well. Ratifying the CAA is a way to blunt the effect, and let your colleagues in Illinois and California and New England have their voices heard. But there’s a bigger reason for you to want to support this. If you’re a Republican in 2026, you exist to serve Donald Trump and his vision for America. You want to help Donald Trump recreate America in his image. The image of America will be the image of the new Capitol Building, and Donald Trump will lead this design. You saw how excited he was about the east wing of the White House; imagine how ecstatic he would be to get to design the Donald J. Trump Capitol Building. Imagine how owned all those Washington libs will be when they walk by the giant golden statue of Donald Trump that hosts Congress.

Libertarians/Communists/Greens/etc: Third parties are at their nadir right now. Zero state or national legislative seats are currently occupied by third parties, which is historically unusual. But increasing the size of Congress would give a shot in the arm to third parties. Getting 25,000 people to vote for you seems much more doable, especially if the whole party goes all-in on one seat. And it only takes one. I gotta believe that the Libertarians could win a Congressional seat in New Hampshire. The Communists could win one in Seattle. And once you get one seat, then it’s off to the races. Getting national recognition as one of 6,641 is really hard - joining or forming a third party is the kind of thing that gets you press. This is speculation, I have no data to back it up, but I fully expect that we would see a big upshot in third party representation and membership. The CAA is exactly what the Libertarians need to break out of their funk.

State legislators: Because you have an opportunity here. The most likely people to be elected to the new Big Congress are those who already have political experience and know what it takes to win an election in a small district. If you vote to ratify the CAA, odds are good that you’ll be among those elected to fill the ranks of Big Congress. And you’ve always wanted to be there in Washington. We both know it.

A Troublesome Typo

The second clause of the amendment describes the situation when the US population is between 3 million and 8 million. It says (my bolding):

There shall be not less than one hundred Representatives, nor less than one Representative for every forty thousand persons

Sounds reasonable enough. This is making the straightforward claim that there should be many representatives, and a high representative-to-constituent ratio.

The third clause of the amendment describes the situation when the US population is greater than 8 million people (i.e. the situation we’re in now). It says:

There shall not be less than two hundred Representatives, nor more than one Representative for every fifty thousand persons.

Notice the non-parallelism with the second clause. The second clause was two less-thans, meaning many representatives and low representative-to-constituent ratio. The third clause is a less-than followed by a more-than, meaning many representatives and a low representative-to-constituent ratio.

Aren’t these two goals - many representatives, and a low representative-to-constituent ratio - in tension?

Yes. In fact, the clause is mathematically impossible to satisfy at populations between eight and ten million. For example, with nine million Americans, we need at least two hundred representatives, but fewer than 9,000,000/50,000 = 180 representatives. Obviously there is no number which is both above 200 and less than 180, so this makes no sense.

At other population sizes, the clause does the opposite of what its founders intended, saying that the legislator-to-constituent ratio should be low and Congress has to be small. For example, at the current US population of 350 million, the clause merely says that Congress must be smaller than 6,641 representatives, meaning that the current Congress size is fine and nothing changes.

The simple explanation is that this is a typo. The people who wrote the law had three clauses, and meant to say “less than . . . less than” in each. But in the third clause, they said “less than . . . more than”. This has been noticed and acknowledged for over two hundred years.

So we have a potential Constitutional amendment which says the opposite of what it definitely means. If passed, this would set us up for a court case that directly pits the legal school of textualism (you need to follow the law as written) against originalism (you need to follow what the people who wrote the law meant). These two schools are often in oblique and complicated conflict. But as far as we know, they’ve never faced so direct a test as a section of the Constitution with an obvious-for-two-hundred-years typo that inverts its meaning. All the Supreme Court Justices who have previously gotten away with talking about how the law is subtle and complicated would have to finally just decide whether textualism or originalism is right, no-take-backs, once and for all. It would be hilarious.

The most likely outcome would be that they would bow to two hundred years of obvious criticism of this incorrectly-worded law, agree that it meant to say that the legislator-to-constituent ratio must be high, and we would get Giant Congress.

But there’s a remote chance that the textualists would win after all. This wouldn’t make things worse - Congress would be constitutionally banned from having more than 6,641 representatives, but this was hardly in the cards anyway. It would also mean that if the US population ever declined to between eight and ten million - admittedly another thing that’s not really in the cards - the Constitution would become logically impossible to follow, and America would officially be a paradox. If the population ever declined to between eight and ten million people, this probably would not be our biggest problem. But it might be the funniest.

The Path To 38

A constitutional amendment must be ratified by 3/4 of states; that’s 38/50. Eleven have ratified it already, so we need 27 more. Of the 39 states that have not ratified the CAA, 13 have legislatures run by Democrats and 25 have legislatures run by Republicans. This has to be a bipartisan effort.

But it’s no worse than the situation with the Twenty-Seventh Amendment. Gregory Watson, the previously mentioned Texas undergraduate, got it passed with $6,000 of his own money and a very dedicated letter-writing campaign. The Congressional Apportionment Amendment may require more work, but the precedent is there.

If you’re a state legislator, or if you know a state legislator, or if you want to be a state legislator after they all move up to Washington, then please introduce a motion to ratify this amendment. And tell all your colleagues that, if they ratify it too, they’ll get to be real Congressmen and Congresswomen. We can have the largest legislative body in the world. We can build monuments again. We can have real third parties again.

Either that, or we’ll turn the Constitution into a paradox and our government will vanish in a puff of logic. Still probably beats what’s going on now.

Of around $67k/year in 2026 dollars.

Under the 2020 census. The number would change upon each subsequent census. In 2030, it will probably be around 6,980.

In case this smacks of cherry-picking, here is a breakdown of the “error” in every state’s Congressional delegation, state house delegation, and state senate delegation. “Error” here is defined as the difference between the representation of each state’s delegation and the percentage of that state that voted for Trump over Harris (or vice versa). In only two states, Florida and Virginia, is the error greatest in the largest body, and both of those states would have Congressional delegations larger than that largest body. In the case of Florida, their delegation would be nearly quadruple the size of their state house.

There could also be an effect from the structure of the TV market. Stations sell ads by region, and each existing media region is larger than the new Congressional districts. So absent a change in market structure, a candidate who wanted to purchase TV advertising couldn’t target their own district easily; they would have to overpay to target a much larger region.

And just to harp on this more, we just blew by the Colorado River Compact agreement deadline and now the federal government is going to start mandating cuts; everybody’s going to sue everybody else. Lake Powell is quite possibly going to dead pool this year, and as far as I can find the congressperson who ran on water issues is saying nothing about it.

This is the weekly visible open thread. Post about anything you want, ask random questions, whatever. ACX has an unofficial subreddit, Discord, and bulletin board, and in-person meetups around the world. Most content is free, some is subscriber only; you can subscribe here. Also:

1: Mox asks me to advertise their 2026 fundraiser. They’re a rationalist/EA coworking space in San Francisco that hosts ACX meetups, ACX grants infrastructure, AI safety work, and more. And while I’m advertising them, they also offer deals on personal and organizational office space.

2: StopTheRace.ai will be holding a protest on Saturday, March 21 in front of major AI company offices, asking them to commit to a mutual pause (ie to stop AI research if every other AI company in the world agrees to do so). Demis Hassabis of Google DeepMind has already informally agreed to something like this in principle (which is why GDM isn’t being protested), and Anthropic has expressed interest but its new responsible scaling policy stops short of an explicit commitment. I think this is a reasonable ask, albeit so unlikely to happen that protests about it will probably do more to raise awareness than be a coherent plan in themselves. If you’re curious about the details of an AI pause, I expect to be able to provide more information in a few months.

3: ACX grantee Markus Englund announces a first set of results from his project to automate anomaly detection in scientific data, finding serious and reportable data issues in eighteen papers, including an influential study linking Parkinson’s to the gut. He plans to scale up his efforts by over an order of magnitude in the year ahead.

California lets interest groups propose measures for the state ballot. Anyone who gathers enough signatures (currently 874,641) can put their hare-brained plans before voters during the next election year.

This year, the big story is the 2026 Billionaire Tax Act, a 5% wealth tax on California’s billionaires. Your views on this will mostly be shaped by whether or not you like taxing the rich, but opponents have argued that it’s an especially poorly written proposal:

It includes a tax on “unrealized gains”, like a founder’s share of a private company which hasn’t been sold yet. This could be an existential threat to the Silicon Valley model of building startups that are worth billions on paper before their founders see any cash. Since most billionaires keep most of their wealth in stocks, any wealth tax will need some way to reach these (cf. complaints about the “buy, borrow, die” strategy for avoiding taxation). But there are better ways to do this (for example, taxing at liquidation and treating death as a virtual liquidation event), other wealth tax proposals have included these, and the California proposal doesn’t.

It appears to value company stakes by voting rights rather than ownership, so a typical founder who maintains control of their company despite dilution might see themselves taxed for more than they have. Garry Tan explains the math here with reference to Google. However, Current Affairs has a good article (?!) that pushes back, saying the proposal exempts public companies like Google. Although private companies would still be affected, this would be so obviously unfair that founders would easily win an exemption based on a provision allowing them to appeal nonsensical results. Still, some might counterobject that proposed legislation is generally supposed to be good, rather than so bad that its victims will easily win on appeal.

It’s retroactive, applying to billionaires who lived in California in January, even though it won’t come to a vote until November. Proponents argue that this is necessary to prevent billionaire flight; opponents point out that alternatively, billionaires could flee before the tax even passes (as some have already done). One plausible result is that the tax fails (either at the ballot box or the courts), but only after spurring California’s richest taxpayers to flee, leading to a net decrease in revenue.

Some people propose that it could decrease state revenues overall even if it passed, if it drove out enough billionaires, though others disagree.

Pro-tech-industry newsletter Pirate Wires finds that 20 out of 21 California tech billionaires interviewed were “developing an exit plan” and quotes an insider saying that “if this tax actually passes, I think the technology industry kind of has to leave the state”. Even Gavin Newsom, hardly known for being an anti-tax conservative, has argued that it “makes no sense” and “would be really damaging”.

The ACX legal and economic analysis team (Claude, GPT, and Gemini) doubt the direst warnings, but agree that the tax is of dubious value and its provisions poorly suited to Silicon Valley.

On one level, it’s no surprise that California, a state full of bad socialists, is considering bad socialist policy. But I think this is the wrong perspective. This proposition isn’t being sponsored by some generic group of Piketty-reading leftists. It’s the project of SEIU (Service Employees International Union) a union of mostly healthcare workers.

This immediately clarifies the debate about whether it’s net negative for revenue. 90% of the revenue from the tax is earmarked for health care. So even if it’s net negative for the state, it isn’t net negative for the health care budget in particular, ie for the people who are sponsoring the measure.

But we can get even more conspiratorial. The SEIU is known in California political circles for pioneering and perfecting the art of extortion via ballot initiative. Their usual strategy goes:

Propose a ballot initiative that will sound nice to voters, but which is actually deliberately designed to ruin some industry.

Demand concessions from that industry in exchange for withdrawing the initiative.

Their first extortion attempt (as far as I know) was the 2014 Fair Healthcare Pricing Act, which would have capped the amount hospitals were allowed to charge for procedures at some unsustainable amount. The hospital association seemed to think this was an existential threat:

If the initiatives are approved by the voters, hospitals could not operate as they do now. It would be necessary for hospitals to restructure their business model and services provided. Additionally, hospitals would be faced with unprecedented decisions — “Which services must be eliminated or cutback?”; “How can the hospital operate without departmental cross-subsidization?”; and “How can strategic planning be conducted in a world of oppression and uncertainty?”

Although the hospitals themselves might be biased, the government’s mandatory fiscal analysis of the initiative seemed to agree, saying that “about 20 hospitals would change from having positive operating margins to having operating losses before taking into account any strategies these hospitals might implement in response to the measure.”

But “help” was on the way. The SEIU offered to withdraw its initiative in exchange for a $100 million “donation” from hospital lobby groups to one of SEIU’s pet causes, plus the right to expand their union into the affected hospitals. The hospitals caved and gave them what they wanted. The union was surprisingly frank in their celebration:

[Union leader Dave] Regan said that the SEIU-UHW had spent $5 million on [backing the ballot initiatives], but that it paid off handsomely. “For a $5 million investment, we get an $80 million turn to pursue those things,” Regan said. He observed that the CHA would have spent as much as $100 million to defeat the initiatives.

Buoyed by their success, SEIU identified dialysis clinics as their next target, and demanded similar union expansion rights (I can’t find any information about whether they also wanted more cash). The dialysis clinics refused, and so began one of the most shameful chapters in California ballot history: The Eternal Kidney Proposition. SEIU proposed a 2018 ballot proposition to cap dialysis clinic revenues at some unsustainable level. The clinics spent $100 million fighting it, “the most money raised for a campaign like this in California history”, and it failed.

And then it was back! In 2020, SEIU proposed a new packet of regulations for dialysis clinics, all of which probably sounded reasonable to the average voter but which had the overall effect of making them ruinously expensive to operate. The measures were opposed by the California Medical Association (representing doctors), the American Nursing Association (representing nurses), various patients’ groups, and even the NAACP (black people are especially prone to kidney disease, and would be hardest hit). Once again, the clinics spent $100 million getting the message out, and the Californian public rejected it.

And then it was back again! In 2022, SEIU proposed basically the same packet of regulations. All the same groups lined up against, now joined by the Renal Physicians Association, the Renal Physician Assistants’ Association, the National Kidney Association, and various veterans groups (older veterans are also commonly affected by kidney disease, and would also be hard-hit). After wasting another $100 million, the proposition was defeated a third time.

Somewhere in this process, Californians started to wonder what was going on. One dialysis proposition might be happenstance, two might be coincidence, but three was enemy action. In 2020, media nonprofit CalMatters published Good Policy Or Ballot Blackmail?, trying to spread awareness of SEIU’s extortion attempts. It focuses on SEIU leader Dave Regan’s love of the tactic:

[SEIU] sponsored Proposition 23 on the November ballot, which would add new regulations for dialysis clinics. It put a similar measure before voters in 2018, which they rejected. In the last two elections, it’s also sponsored a measure to tax hospitals in the Los Angeles County city of Lynwood, and to cap prices at Stanford hospitals and clinics in several Bay Area Cities.

And that doesn’t count the many initiatives it began working on by collecting signatures but withdrew before they reached the ballot — including a minimum wage initiative in 2016, a pair of measures to limit hospital fees and executive pay in 2014, and two other initiatives to curb hospital bills and expand charity care in 2012.

All told, these campaigns have cost the union at least $43 million, and resulted in no wins on the ballot in California — though union president Dave Regan says they’ve helped make progress in other ways. The practice has earned him a reputation as an aggressive labor leader who uses the initiative process to needle adversaries in the health care profession as he tries to expand membership in his union.

“Dave Regan has made this into a strategy,” said Ken Jacobs, chair of the UC Berkeley Labor Center, which researches unions […]

And on the opinions of other labor leaders:

“There’s great resentment toward him because of his ‘my way or the highway’ kind of way of dealing with other folks,” said Sal Rosselli, who worked with Regan as part of the larger SEIU umbrella union for many years, but now heads the rival National Union of Healthcare Workers.

Regan’s frequent use of ballot measures is “dishonest with voters,” Rosselli said. “He’s not doing it to improve the quality of health care… He’s doing it to gain leverage over the employers for top-down organizing rights.”

Wall Street Journal agreed, and even the more liberal Los Angeles Times described SEIU’s work as “political extortion”.

Given that all of SEIU’s past progressive-sounding legislation has been thinly-disguised extortion attempts, might this one be as well?

The argument against: SEIU is entirely focused on healthcare and doesn’t care about the tech industry.

The argument in favor: Gavin Newsom cares about the tech industry. And SEIU cares about Gavin Newsom. Governor Newsom has been eyeing the Democratic presidential nomination in 2028. He needs a reputation as a Sensible Moderate and plenty of billionaire donors. And there’s a clear path to the latter - as Silicon Valley tires of Trump’s random acts of economic devastation, some tech leaders are starting to regret their flirtation with right-wing populism and wonder whether the other side has a better offer. If everything goes exactly right, he can make it work. Instead, there’s this wealth tax, coming at the worst possible time. Newsom really, really wants it to go away. So, Politico reports, he’s been meeting with SEIU leader Dave Regan to see what’s on offer:

Gavin Newsom and his staff have quietly talked to the champion of a controversial wealth tax proposal seeking an off-ramp to defuse a looming ballot measure fight.

The conversations, reported here for the first time, have occurred intermittently for months as SEIU-UHW’s ballot initiative targeting billionaires migrated from the backrooms of California politics to the center of a raging debate about Silicon Valley and income inequality, sparking tech titans’ wrath and vows to move out of state.

“We’ve been at this for four months,” Newsom said in an interview with POLITICO, describing an “all-hands” effort that has included him meeting one-on-one with SEIU-UHW’s leader, Dave Regan.

A compromise does not appear imminent. A union official cast doubt on the possibility of a deal, saying the two sides do not currently have another meeting scheduled and framing a ballot fight as an inevitability.

My read: rather than a heartfelt attempt at redistribution, this is a heads-I-win-tails-you-lose gambit by the SEIU. If Governor Newsom offers them enough concessions and bribes, they’ll drop the initiative. If not, they’ll carry it through, maybe win, and get billions of dollars of extra health care spending, some of which will flow through to their members. Either way, whatever happens to the rest of the state isn’t their concern.

One critique of capitalism argues that, although in theory it aligns incentives perfectly so that companies should produce things that people want, in practice it also incentivizes the hunt for loopholes: addictive products that can take advantage of seemingly-tiny wedges between what people will buy and what’s good for them. Cigarettes, casinos, payday loans, and social media all demonstrate that these wedges collectively form a multi-trillion dollar niche.

In the same way, SEIU seems to have found a bug in direct democracy: it incentivizes interest groups to search for the most destructive possible ballot initiative that might nevertheless get approved by low-information voters, since this gives them leverage over anyone willing to bribe them into withdrawing their poison pill. Seems like an ignominious end for California’s ballot proposition system.

The Wednesday open threads are usually paid-subscriber only, but I’m making this one public to give people more space to talk about everything going on. Also:

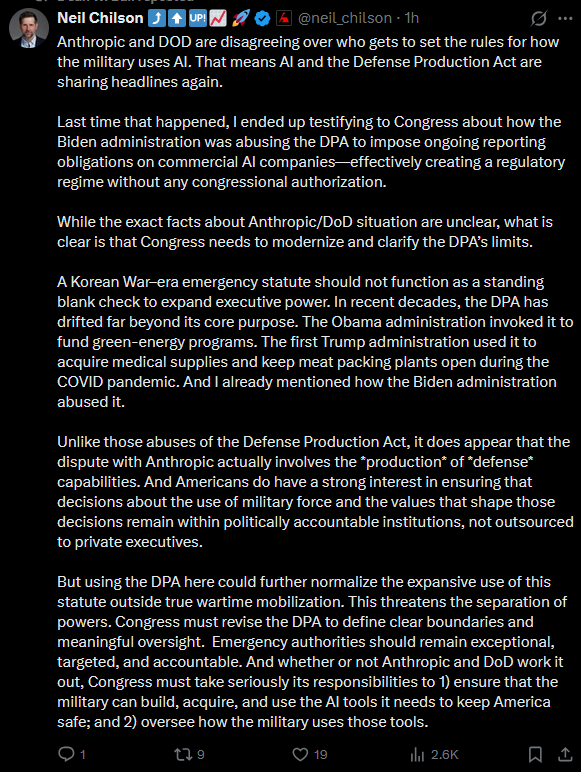

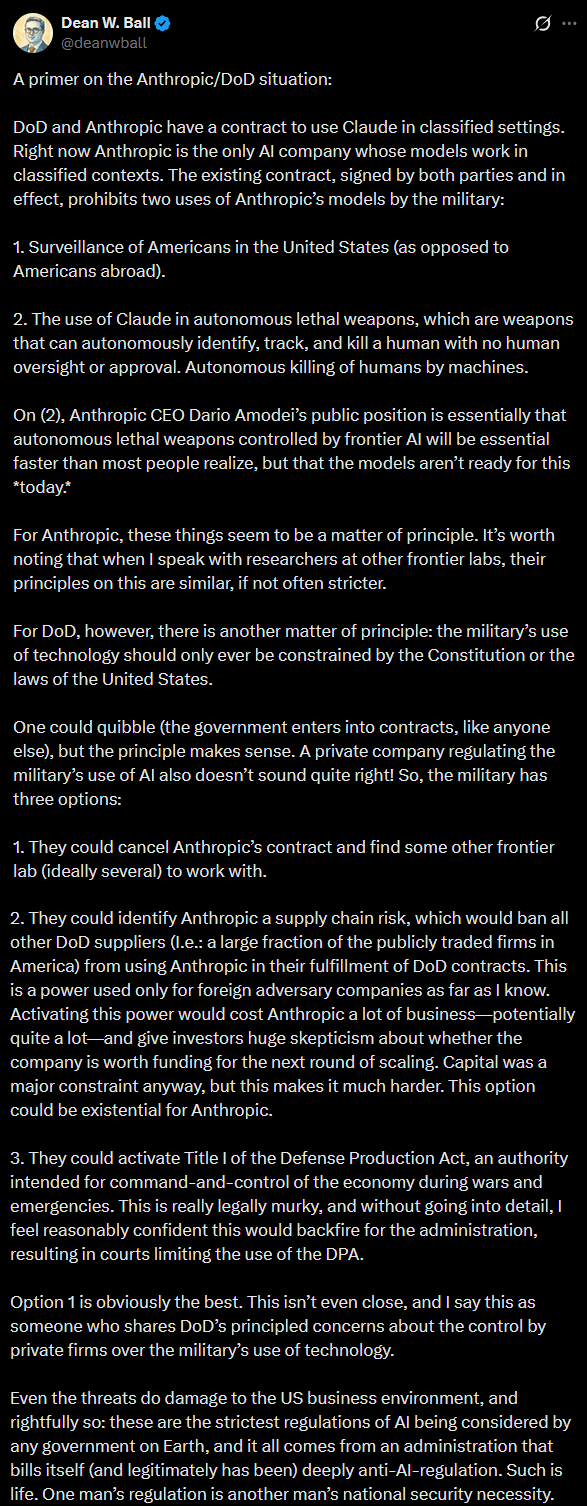

1: The OpenAI/Pentagon situation has evolved since Sunday’s ACX post (“All Lawful Use: Much More Than You Wanted To Know”). For up-to-date analysis of the latest contract, I endorse this LW post from today, on the newest contract: OpenAI’s Surveillance Language Has Many Potential Loopholes And They Can Do Better.

Having Your Own Government Try To Destroy You Is (At Least Temporarily) Good For Business

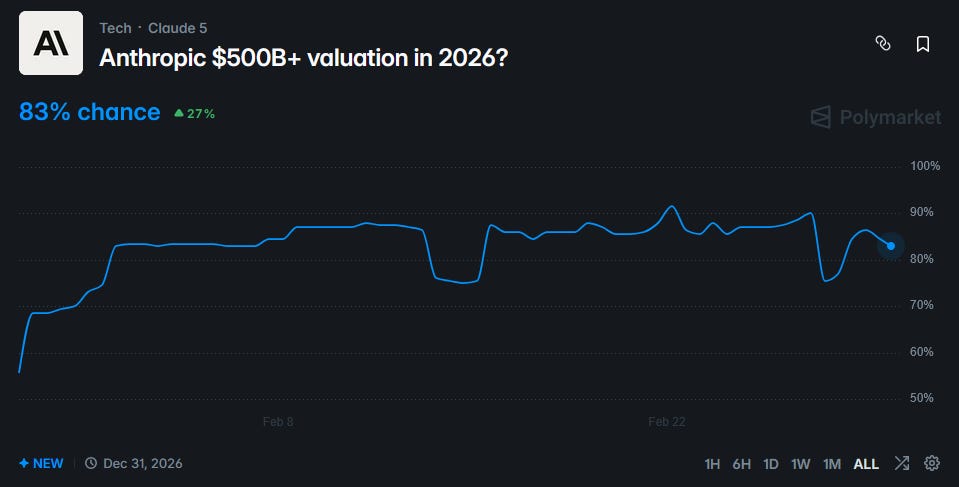

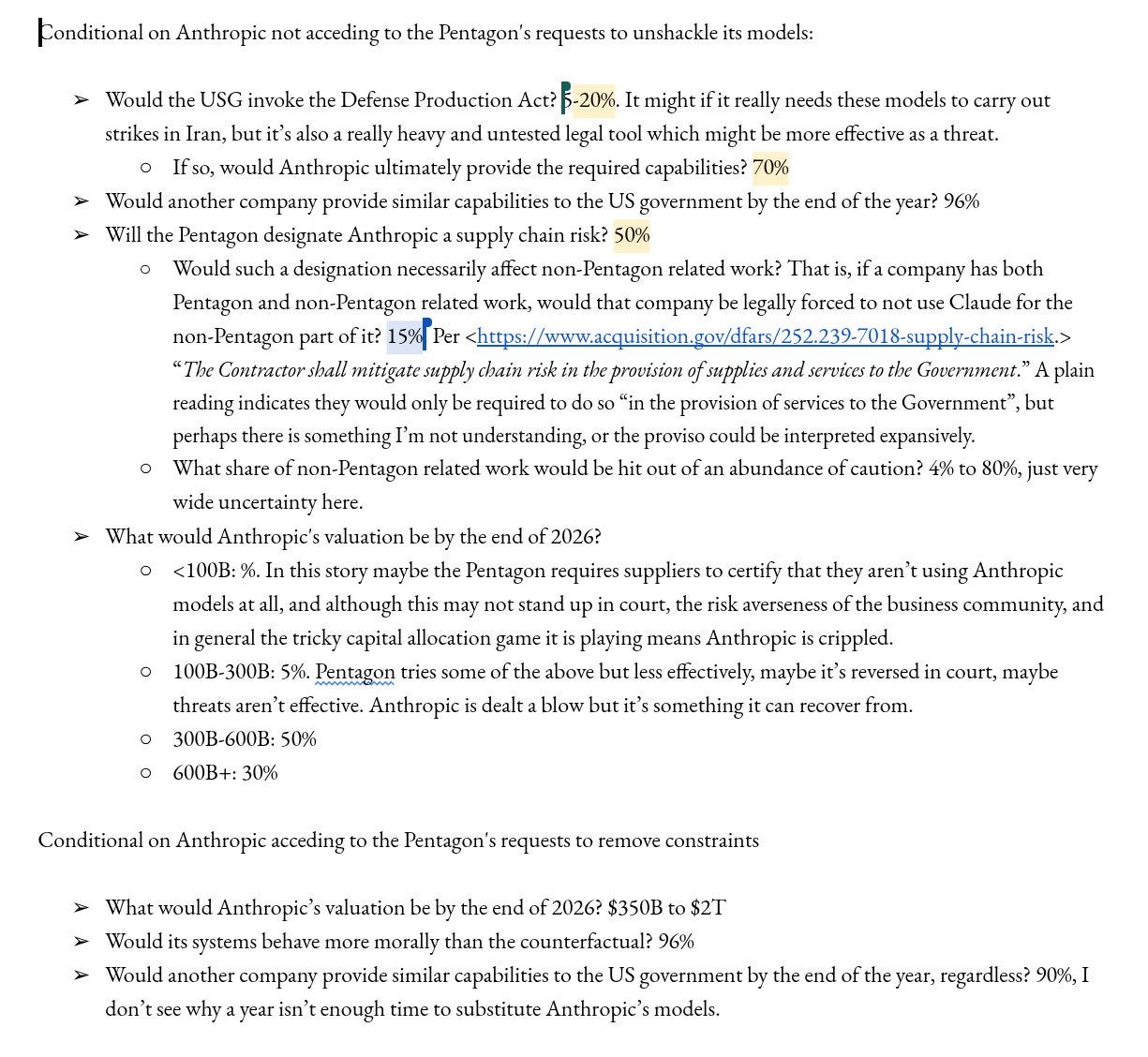

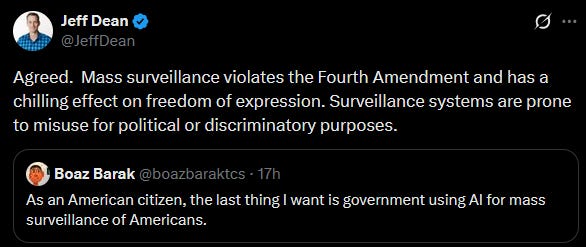

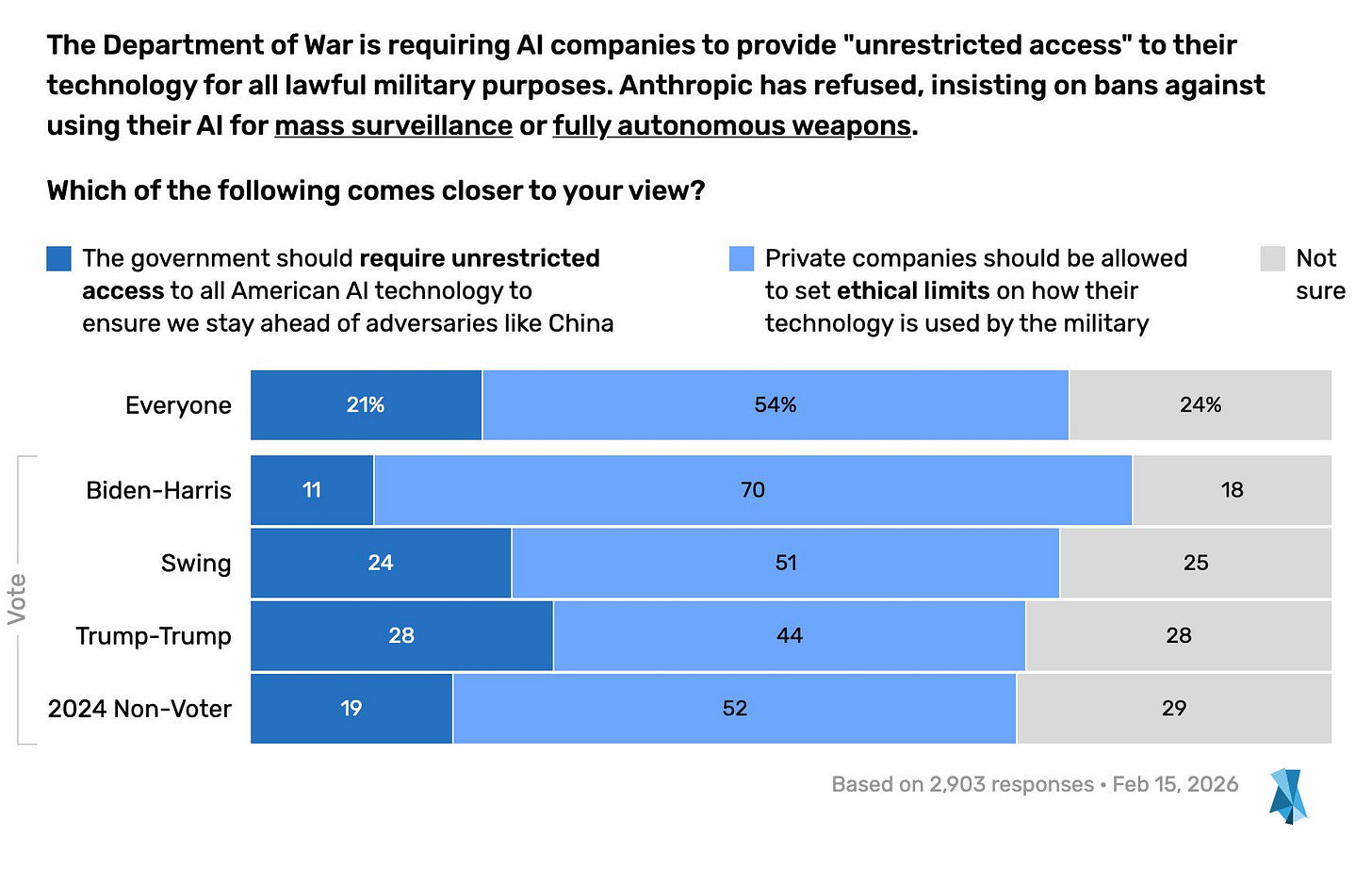

On Friday, the Pentagon declared AI company Anthropic a “supply chain risk”, a designation never before given to an American firm. This unprecedented move was seen as an attempt to punish, maybe destroy the company. How effective was it?

Anthropic isn’t publicly traded, so we turn to the prediction markets. Ventuals.com has a “perpetual future” on Anthropic stock, a complicated instrument attempting to track the company’s valuation, to be resolved at the IPO. Here’s what they’ve got:

Upon the “supply chain risk” designation, predicted value at IPO fell from about $550 billion to $475 billion - then, after a day or two, went back up to $550 billion. No effect!

A coarser yes-no Polymarket tells the same story:

The chance of Anthropic getting a $500 billion+ valuation in 2026 fell from 90% to 76%, before rebounding to 83%.

Why have the markets shrugged off this seemingly important event?

Partly it’s because Anthropic seems likely to win on appeal. Hegseth has said the government will keep using Anthropic for the next six months (undermining his case that they’re a national security risk) and has signed a substantially similar contract with OpenAI (undermining his case that their contract terms were unworkable). The prediction markets think the courts will be sympathetic:

But even in the 28% of timelines where the designation sticks, things don’t seem so bad. Secretary of War Hegseth originally tweeted that:

In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.

Framed this way, the Pentagon’s actions sound devastating. Anthropic relies on compute to train and run its AIs. Most of this compute is in data centers owned by Amazon, Google, and Microsoft. At least Amazon and Microsoft have contracts with the US military. If they had to drop Anthropic, it would make it impossible for the company to stay a frontier AI lab.

But in their own blog post, Anthropic described the situation differently:

If you are an individual customer or hold a commercial contract with Anthropic, your access to Claude—through our API, claude.ai, or any of our products—is completely unaffected.

If you are a Department of War contractor, this designation—if formally adopted—would only affect your use of Claude on Department of War contract work. Your use for any other purpose is unaffected.

In other words, the “supply chain risk” designation only means that companies can’t use Anthropic products in their specific Department of War contracts. So if Amazon is doing 95% normal civilian cloud compute stuff, and 5% special government contracts, only 5% of their contracts are affected. This is trivial! Anthropic can keep all its compute and most of its business partnerships even with Department-of-War-linked companies!

The lawyers who weighed in seem to think that Anthropic’s interpretation of the law is correct, and Secretary Hegseth’s interpretation confused. In some situations, this might be cold comfort - how much does it help to be right about the law when the government is wrong? But in this case, it probably helps a lot. Amazon, Google, and Microsoft are all big Anthropic investors - each owns about a 10% stake - and have multi-billion dollar AI compute contracts. Together, the three tech giants must have at least $100 billion riding on Anthropic’s success. They also have good administration connections and great lobbyists, and even Hegseth isn’t stupid enough to pick fights with them all at once. So probably they send their lobbyists to have a talk with Hegseth about what the “supply chain risk” designation actually entails, Hegseth enforces the letter of the law, and Anthropic is barely affected. At least this is the story the prediction markets are going with:

In this best-case scenario, Anthropic’s downside is losing some government contracts that made up ~5% of its business, plus some other Department-of-War-contractor contracts that probably add up to another ~5%.

Against that, the upside is great publicity. Despite a lot of work and some controversial Superbowl ads, Anthropic had never before managed to overcome ChatGPT’s superior name recognition. But they seem to have finally done it: Claude went from #120 on the App Store in January, to #1 this weekend, apparently driven by people who heard about the Pentagon standoff and were impressed by their principled stance.

This could have been a mixed blessing - Anthropic was previously trying to stand out as a B2B company while letting OpenAI have the dubious honor of producing consumerslop. But early signs suggest they might be winning over some companies too. From a Reddit thread on the topic:

As someone who manages IT for a mid-size company, this is actually a big deal. We were evaluating both Claude and ChatGPT for internal use and the Pentagon thing was basically the tipping point for us. Not because we're government adjacent or anything, just because a company willing to walk away from a massive contract on ethical grounds is probably also going to handle our data more carefully than one racing to close every deal possible. The app store ranking makes sense to me.

Finance VP for a mid size tech, we’re moving completely away from ChatGPT/Copilot to Claude.

I’m impressed with the prediction markets here - they’ve taken a bold and counterintuitive stance that I wouldn’t have otherwise considered (that these developments barely harm Anthropic) and made it legible, to the point where I basically believe it.

The Midterms As Potential Crisis

America will hold midterm elections on November 3. Incumbents always have a hard time during midterms, and Trump’s approval rating is low, so it’s expected to be a good year for Democrats. Prediction markets expect them to win at least the House (80% chance) and maybe even the Senate (20 - 40% chance).

This simple story is complicated by two different Republican attempts to change voting law.

Republicans generally believe there is significant fraud in elections, especially immigrants voting illegally, and propose strict ID requirements to prevent this. Most Democrats believe fraud is rare, and that strict ID requirements are more likely to disenfranchise normal voters who don’t have the right forms of ID available. The latest flashpoint in this battle is the SAVE Act, a Republican-sponsored bill which would require voters to show a passport, birth certificate, or Real ID when registering to vote for the first time or changing their registration. It recently passed the House, but is on track to be filibustered by Democrats in the Senate:

At the same time, there are rumors that the Trump administration is working on an executive order to declare a national emergency and take control of elections. The order would say that foreign countries have been rigging US elections (some commenters speculate that maybe Maduro could be granted clemency for “admitting” to this), and respond with a series of extreme measures. These would include banning voting machines, restricting vote-by-mail, and requiring all voters to re-register before the election. For what it’s worth, Trump has denied all of this, although his previous denial of Project 2025 makes this less reassuring.

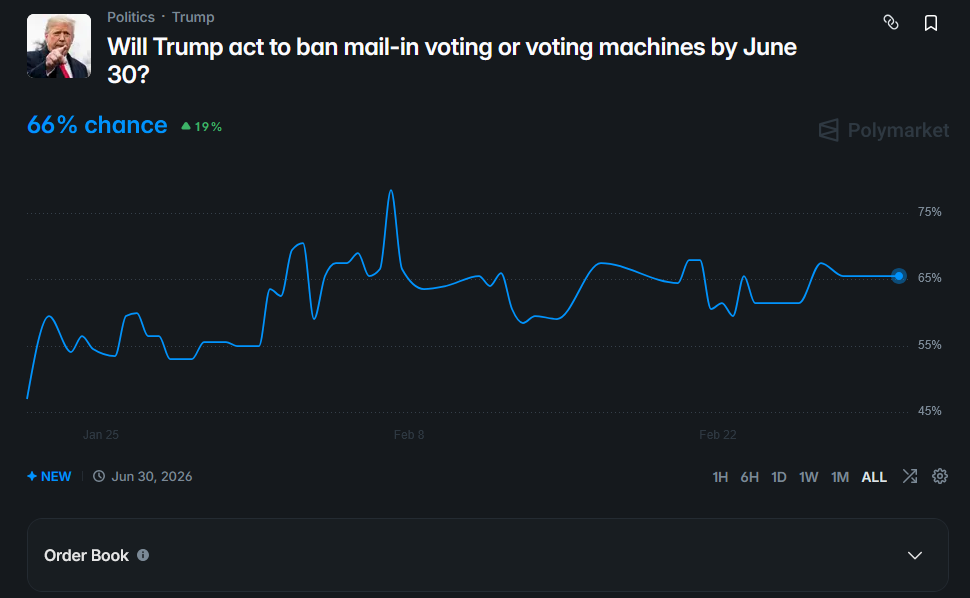

It looks like the markets are saying that Trump will try something, but maybe not the full executive order under discussion.

Most commentators think the EO is unconstitutional, with at least one liberal arguing that it would be good, since it would force the courts to explain exactly how illegal all of this is. But if it somehow made it through the courts, the most likely outcomes could be:

Chaos (at least according to the mostly-liberal commentators I’ve been reading). Do federal agencies really have the capacity to re-register every voter in the next six months (imagine the DMV lines!) Can precincts really switch from voting machines to secure paper ballots during that period? Is there enough supply of the special holographic paper that the order demands for ballots? If not, what happens? Is the election so borked that we can’t figure out who controls Congress? What happens then? At a minimum, lots and lots of court cases.

A blue wave. This would be a somewhat surprising result of Republican policies, but it makes sense. All of these restrictions select for high-information, high-motivation voters - people who hear about the new rules and get fired up enough to hunt down their birth certificate, march down to the DMV, wait on line for one million hours, and re-register. Due to their education advantage and the structural features of midterms, that probably favors Democrats. Democrats are more likely to own passports (one of the easiest forms of valid ID), and less likely to trigger increased scrutiny by having changed their name recently (because liberal women are less likely to marry and take their husband’s surname). First-order, a blue wave like this is good for the left. But second-order, if the above factors lead to some completely implausible blue wave that makes no sense by normal election standards, then Republicans could decide the elections were illegitimate and we’re back at chaos again.

Too many degrees of freedom: Do the Republicans understand the calculus above? One theory is that they plan to make up for it with degrees of freedom. There will be many small decisions about how strictly to enforce each rule, and maybe they’ll be lenient in Republican districts and strict in Democratic ones. The administration is trying to purge potentially fraudulent voters from the rolls - a process with obvious potential for abuse (purged voters can re-register to prove their non-fraudulentness, but this adds an extra layer of complication, so if mostly Democrats get purged, this overall decreases the Democratic voter base). If the administration finds some way to disproportionately disenfranchise Democrats - or if even if Democrats just believe they’ve done this - then Democrats might consider the election results illegitimate, and we would get - again - chaos.

However, courts seem to be blocking all of these measures (except the SAVE Act, which is unlikely to pass Congress). It’s hard to see a world where the really disruptive ones get through. What do the markets say?

This seems like a good sign that there won’t be mass voter disenfranchisement.

But Metaculus expects a 25% chance that martial law is declared?!

In every election he’s been involved in, Trump has either outright said he won’t accept a result that goes against him, or at least given mixed signals about this. In 2020, he took various extreme steps to overturn the election, including telling state officials to throw out ballots, demanding that the count be stopped, trying to get the Vice President to certify fake electors, and the January 6 protests. Will he try the same thing during the midterms? He might not care as much about elections where he’s not personally involved. Or he might use the same playbook, this time with a much more docile Republican party mostly purged of spine-havers like Mike Pence. If he tries this, probably Democrats will protest; if those Democratic protests become unruly, maybe he’ll declare martial law to shut them down. “Chaos” doesn’t even begin to describe this situation.

Maybe the best headline summary of election forecasting are the “free and fair” questions, but they’re hard to interpret.

A Manifold market with 25 forecasters gives a 41% chance that the elections aren’t considered “free and fair”. The resolution criteria is the opinion of international election observers and the mainstream media, who lean liberal. In the past, these observers have sometimes given the US a less-than-perfect verdict - for example, OSCE described the 2024 US election as:

While the general elections in the United States demonstrated the resilience of the country’s democratic institutions, the election process took place in a highly polarized environment. The election was well run, and candidates campaigned freely across the country with the active participation of voters. However, the campaign was marred by disinformation and instances of violence, including harsh and intolerant rhetoric. Repeated, unfounded claims of election fraud negatively impacted public trust.

…and they can probably find even more to complain about in a Trump-run election. Is this sufficient to create uncertainty around the resolution, and drop the probability to 40%? I’m not sure.

But Metaculus has a similar question noting that “This question may resolve as Yes [even] if the EAC, the OSCE, or the Carter Center notes only isolated problems or areas for improvement”, and it’s at 92%, which is reassuring.

I think the best summary of forecasters’ views on the midterms is that there’s a decent chance (~50%) Trump tries to change the rules around mail-in ballots, and a modest chance (~25%) he tries something more extreme - but that it probably won’t make much difference, the election will still be considered fair by international observers, and Democrats will still win.

I’m very interested in creating better prediction markets about the fairness of the 2026 elections. If anyone has ideas for how to do this, let me know.

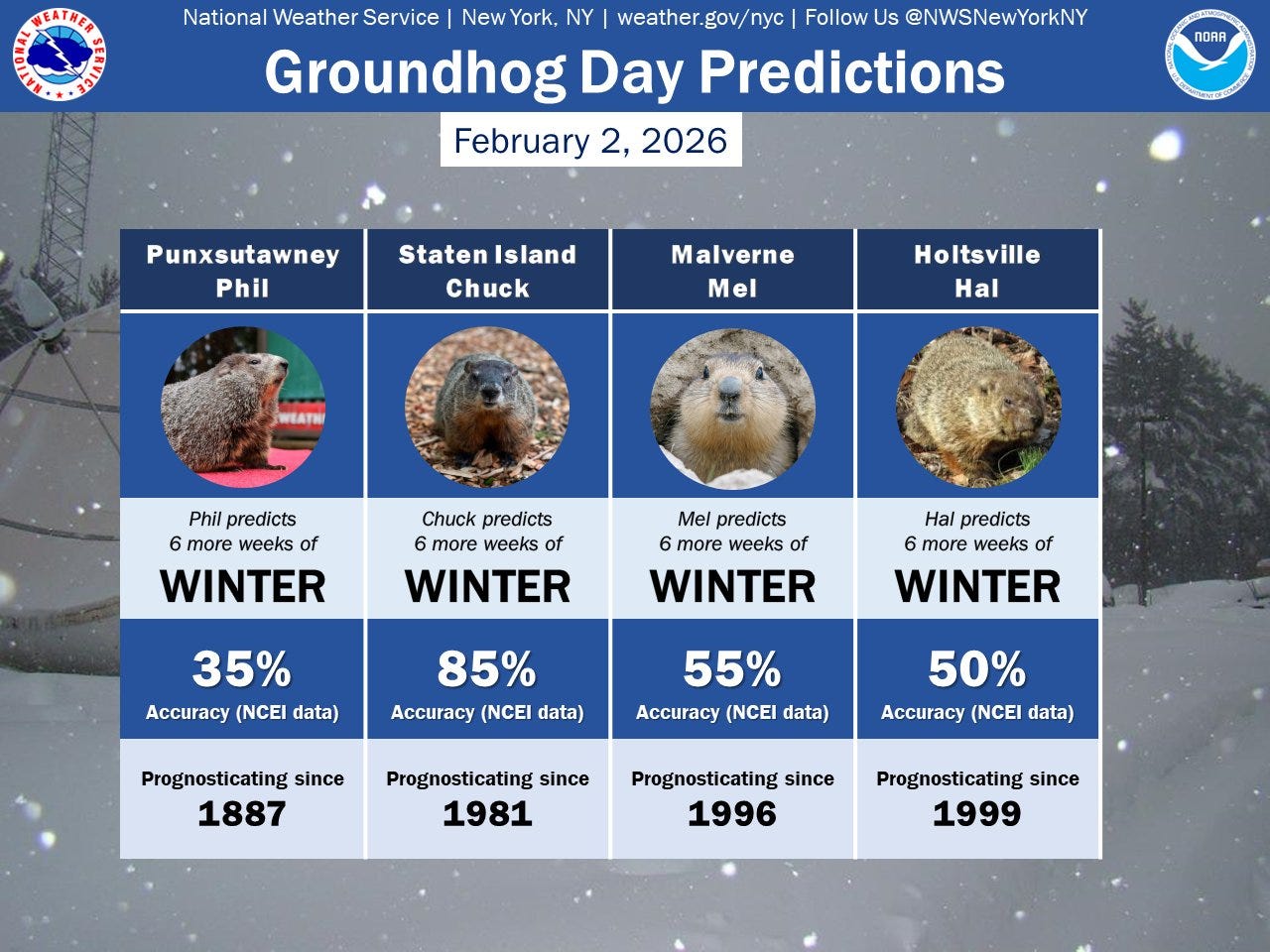

Groundhog Day

Tweeted by the National Weather Service’s New York City branch:

Punxsutawney Phil, the famous Groundhog Day groundhog, actually has less than 50% accuracy in predicting the length of winter. At what point do we flip the legend and say that there’s more winter if he doesn’t see his shadow?

But wait! Staten Island Chuck has an impressive 85% accuracy! The graphic says “since 1981”, which would imply 45 years of prognostication, but it looks like their source is this site, which only counts the last twenty years of data. That would also match the percent, since 85% of 20 is a round 17. In a separate analysis of 32 years, the Staten Island Zoo accords him an 81% success rate. That’s p = 0.0002 - plenty significant even after a Bonferroni correction for multiple magic groundhogs.

So is the groundhog legend true? Seems like it can’t be - the legend originated with Punxsutawney Phil, who does worse than chance. What kind of crazy Gettier case would we have to believe in to have the original magic groundhog be a fraud but, coincidentally, have another groundhog a few hundred miles away be actual magic?

A more prosaic explanation is that, according to this site, Staten Island Chuck is almost a broken clock, predicting spring on 25/31 occasions. If early springs are more common than long winters on Staten Island, that fully explains the phenomenon. It could equally well explain Mojave Max, the legendary anti-oracular tortoise of Las Vegas, who has managed a 20% success rate over decades on what ought to be a coin flip - he won’t stop predicting long winter, and is nearly always wrong.

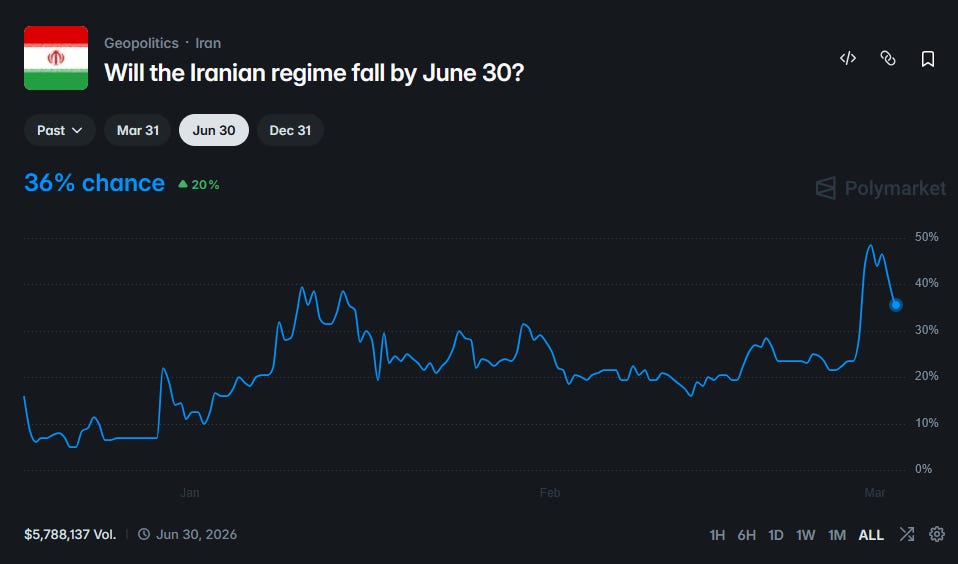

Iran Warcasting

Speaking of Groundhog Day, we’re bombing the Middle East again. Here’s what the markets have to say:

These two well-behaved markets agree on a somewhat less than 50-50 chance that the current round of airstrikes topple the Iranian regime.

Alireza Arafi, a hardline cleric with no distinguishing characteristics, is weakly favored to succeed Khameini as Supreme Leader. Other contenders include Khomeini’s grandson and Khameini’s son, and there is a 15% chance that they abolish the position before figuring out a successor.

The Strait of Hormuz is the waterway between Iran and Arabia that many of the world’s oil tanker routes pass through. Iran is already threatening traffic in the strait; if it threatened it more, it might be able to damage the global economy. This wouldn’t really help anything - Iran is part of the global economy too - but it would probably feel good to annoy the US a little more than they could otherwise do. Realistically this all comes down to the resolution criteria - Iran will certainly threaten the Strait, but probably can’t keep it 100% closed forever. The criteria here specify decreasing a seven-day moving average of traffic to below 20% of its usual level, which forecasters seem to think is more likely than not.

Manifold expects between 6 - 100 US casualties.

Polymarket thinks the war will be over by March 31, but…

…a Manifold market leaves some probability on it continuing until January (or perhaps restarting by then). Gotta say, I’m not seeing this one.

Reza Pahlavi is the heir of the Shahs of Iran. Polymarket thinks that if the current regime falls, there’s about a 40% chance they’ll reinstate the monarchy.

I found this Marginal Revolution post helpful in making sense of the markets’ view on Iran. America hoped that killing the Ayatollah would provoke mass protests and make the regime collapse. That doesn’t seem to have happened, and the regime seems ready to appoint a new Supreme Leader and keep going. America’s strategy will be to keep killing as many higher-ups as possible and bombing Iranian military sites, in the hopes that eventually the populace rises up or the remaining ayatollahs fail to hash out a succession plan. Iran’s strategy will be to just try to hold on, and cause enough pain for America and its allies that the US goes away sooner rather than later. Most likely America will either win or give up within a month, but there’s a long tail of outcomes with continued conflict until potentially as late as next year.

MNX

Stephen Grugett and Ian Philips of Manifold Markets have announced a new project, MNX.

MNX is a noncustodial cryptocurrency-based futures exchange offering financial products relating to AI, including some prediction-market-shaped ones. For example, ECI26 lets users place bets on the highest score that an AI will attain on the Epoch Capabilities Index by the end of the year.

Manifold is a great site, and I challenged Grugett on why he’s starting a new project. His answer: hedging. I didn’t transcribe all the details, but that’s fine, because Vitalik coincidentally wrote a pro-hedging manifesto last week.

Recently I have been starting to worry about the state of prediction markets, in their current form. They have achieved a certain level of success: market volume is high enough to make meaningful bets and have a full-time job as a trader, and they often prove useful as a supplement to other forms of news media. But also, they seem to be over-converging to an unhealthy product market fit: embracing short-term cryptocurrency price bets, sports betting, and other similar things that have dopamine value but not any kind of long-term fulfillment or societal information value. My guess is that teams feel motivated to capitulate to these things because they bring in large revenue during a bear market where people are desperate - an understandable motive, but one that leads to corposlop.

I have been thinking about how we can help get prediction markets out of this rut. My current view is that we should try harder to push them into a totally different use case: hedging, in a very generalized sense (TLDR: we're gonna replace fiat currency)

Prediction markets have two types of actors: (i) "smart traders" who provide information to the market, and earn money, and necessarily (ii) some kind of actor who loses money.

But who would be willing to lose money and keep coming back? There are basically three answers to this question:

1. "Naive traders": people with dumb opinions who bet on totally wrong things

2. "Info buyers": people who set up money-losing automated market makers, to motivate people to trade on markets to help the info buyer learn information they do not know.

3. "Hedgers": people who are -EV in a linear sense, but who use the market as insurance, reducing their risk.(1) is where we are today. IMO there is nothing fundamentally morally wrong with taking money from people with dumb opinions. But there still is something fundamentally "cursed" about relying on this too much. It gives the platform the incentive to seek out traders with dumb opinions, and create a public brand and community that encourages dumb opinions to get more people to come in. This is the slide to corposlop.

(2) has always been the idealistic hope of people like Robin Hanson. However, info buying has a public goods problem: you pay for the info, but everyone in the world gets it, including those who don't pay. There are limited cases where it makes sense for one org to pay (esp. decision markets), but even there, it seems likely that the market volumes achieved with that strategy will not be too high.

This gets us to (3). Suppose that you have shares in a biotech company. It's public knowledge that the Purple Party is better for biotech than the Yellow Party. So if you buy a prediction market share betting that the Yellow Party will win the next election, on average, you are reducing your risk.

(mathematical example: suppose that if Purple wins, the share price will be a dice roll between [80...120], and if Yellow wins, it's between [60...100]. If you make a size $10 bet that Yellow will win, your earnings become equivalent to a dice roll between [70...110] in both cases. Taking a logarithmic model of utility, this risk reduction is worth $0.58.)

See the tweet for more, including a suggestion that “the real solution [might be] to go a step further, and get rid of the concept of currency altogether”.

MNX will not be getting rid of the concept of currency altogether. Their vision of a hedge market relies on some more prosaic beliefs.

First, that Polymarket and Kalshi are doing a good job filling the gambling niche, Metaculus is doing a good job filling the information-aggregation niche, and hedging is the last prediction market niche capable of spawning a billion-dollar company. Actually, why set your sights so low? There’s currently two trillion dollars tied up in the derivatives market; a better hedge would be very lucrative.

Second, that hedging is about to enter a renaissance. Even sophisticated hedge funds only hedge a few types of risk, because nobody wants to spend hundreds of hours sculpting a hedge portfolio that catches 99.99% of possibilities and changing it every few days as the market shifts form. But if the Agent Economy Of The Future brings the cost of intellectual labor down near zero, then there’s no reason not to do that. If you invest in a seaside resort, your AI can figure out the chance of a hurricane, and of a tsunami, and of an oil spill, and of a thousand other things, and buy a tiny share of each on the prediction markets, and feel confident that you’re expressing your exact thesis (seaside resorts are good) separate from any acts of God that might disturb it.

Third, the past few years have seen dramatic advances in financial technology. Crypto traders have invented the perpetual future, a new instrument that tracks an asset without requiring anyone to own the asset involved. That means traders can buy and sell shares of SpaceX, OpenAI, and other nonpublic companies that won’t actually give you their shares. Hedging the price of nickel used to require someone somewhere in the process to own an actual warehouse full of nickel. Now you can skip that step.

(the other technological sea change is that this is possible at all. Five years ago, cryptocurrency prediction markets were too complicated. In the late 2010s, a group called Augur raised $5 million for the project but never managed to create usable software. FTX flirted with prediction-like contracts but never got them off the ground even with all their billions. Polymarket was the first to really solve this, making $10 billion in the process, but even they were barely usable in the early days. But Stephen’s making MNX with his own money and a team of 1-2 people. He benefits partly from the vibecoding revolution, and partly from all of the billions of dollars spent on improving cryptocurrency rails - MNX uses the stablecoin USDC).

MNX is focusing on AI for now, because it’s buzzy and there’s lots of money flowing into it. But if goes well, it could one day expand to seaside resorts, nickel, and everything else.

Elsewhere In Prediction Markets

1: CEO Chris Best reports that Substack is partnering with Polymarket to make it easier to embed prediction markets in Substack posts and notes. I haven’t been using the embeds here because they don’t let you see the history graph, but I’m excited about them in general. And his post also mentions that “one in five of Substack’s top 250 highest-revenue publications [has] started using [prediction markets]”, which surprises me but seems like a great sign.

2: Yahoo Finance: Man Bet Entire Life Savings Of $342,195 That Elon Musk Would Fail. This is more heartwarming than it sounds - it’s about economist Alan Cole and a Kalshi market about whether DOGE would successfully cut the federal budget by some amount. Cole was an expert in tax law and knew that the budget is sufficiently constrained that it was literally impossible to cut it that amount, and so (after getting his wife’s buy-in) put his entire life savings on NO. NO turned out correct, netting him a 37% profit after one year.

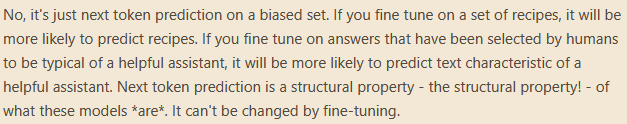

3: This Matt Yglesias tweet is more interesting than it sounds: